
With so many levels of indexation, it’s easy to see how a URL can be technically indexed but have little to no visibility in the SERPs.Īdditionally, this graphic makes it clear that indexation is too complex to take a single metric on # of pages indexed at face value instead, you’ll want to keep an eye over multiple data points regularly. Moz.com/blog/crawling-indexing-its-not-as-simple-as-just-in-or-out there are different levels of indexation.Īgain, Rand has already illustrated that last point well:.a page is not guaranteed visibility just because it is in the index, and.# of page receiving at least one visit from search engine x is different from # of pages indexed because: After all, that’s what all this obsession about content being crawl-able, pages being indexed, and index bloat is all about – making sure that pages that deserve search engine traffic have the opportunity to get it, and making sure that pages that don’t deserve the opportunity aren’t getting traffic. In that article, Rand explains the importance of looking at the number of pages receiving organic search engine traffic. Not Paying Attention to Which Pages Get Traffic There’s simply better metrics than site search. SEO legend Rand Fishkin does a great job explaining how terrible this data is in Indexation for SEO: Real Numbers in 5 Easy Steps.

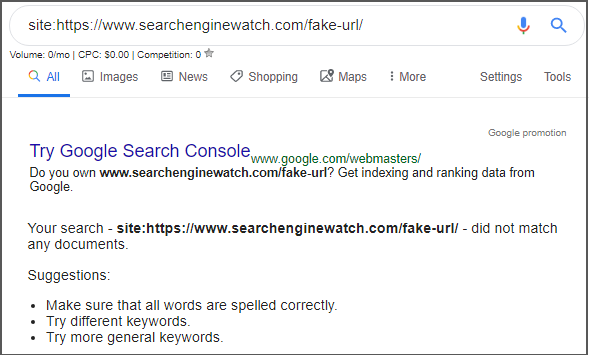

Unfortunately, its notoriously inaccurate. The “site: search” is a common way people check on the number of pages a search engine indexed for their site. Later on, we’ll get into how to uncover these issues using Webmaster Tools.

Ensuring the search engines are crawling the content that matters in a timely manner can have a solid impact on SEO. There’s things you can do to mitigate these issues (which often reveal deeper problems with your site), but its a common mistake to not monitor for these issues at all.

This can occur if you have accidentally prevented the page from being crawled OR if the search engines are over-crawling other pages.Ĭrawling too many URLs: One of the reasons important pages won’t get crawled as frequently as you want (or at all) is if the search engines are too busy crawling pages that are unimportant or not crawl-worthy.Ĭrawl errors: When the search engines have a problem downloading a specific URL. There are basically three kinds of crawl issues.Ĭrawling too few URLs: When pages you want to be crawled are not crawled frequently enough or at all, eliminating them or their newest content from eligibility for indexation and inclusion in the SERPs. Not Monitoring the CrawlĪnother thing we learn in SEO 101 is that before the search engines can even index a page, they must crawl the page. As in duplicate content, if your site has index bloat, the consequent dissipation of link juice and constrained crawl budget can have a significant impact on SEO traffic. If more pages are indexed then you the number of pages you want to be search engine landing pages, then you may have index bloat. Over-indexation is when pages you don’t want indexed are indexed, leading to private pages being public or duplicate content. This especially occurs if the pages are new or infrequently linked to (such as pages deep inside your site) and means you could be missing out on traffic. Under-indexation is when pages you want traffic to are not getting indexed. Monitoring indexation helps discover two important issues – under-indexation and over-indexation. Naturally, a common mistake we see is total failure to monitor indexation at all.

For a given page to get any traffic via search engines, it must be in the index, so it makes sense to make sure that the pages you want to get traffic to are indexed.įurther, it makes sense to ensure that pages you don’t want indexed are not indexed. In SEO 101, we learn that the search engines build an index that contains all pages which might be eligible for display in the search engine results pages (SERPs).
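As a rough illustration of the "number of pages receiving organic search engine traffic" metric discussed above, here is a minimal Python sketch. It assumes a hypothetical analytics export in CSV form with `landing_page`, `medium`, and `sessions` columns; that schema, the sample data, and the helpers `pages_with_organic_traffic` and `traffic_gap` are all illustrative assumptions, not any particular analytics tool's format or API. The sketch counts distinct landing pages with at least one organic session and lists the pages you intend as search landing pages that received none.

```python
import csv
import io

# Hypothetical export (assumed schema, not a real tool's format):
# one row per landing page and traffic medium, with a session count.
SAMPLE_EXPORT = """landing_page,medium,sessions
/,organic,120
/blog/seo-mistakes,organic,45
/blog/seo-mistakes,referral,3
/about,direct,10
/old-promo,organic,0
"""


def pages_with_organic_traffic(csv_text, medium="organic"):
    """Return the set of landing pages with at least one session from `medium`."""
    pages = set()
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["medium"] == medium and int(row["sessions"]) >= 1:
            pages.add(row["landing_page"])
    return pages


def traffic_gap(intended_pages, csv_text):
    """Return intended landing pages (sorted) that received no organic sessions."""
    return sorted(set(intended_pages) - pages_with_organic_traffic(csv_text))


if __name__ == "__main__":
    receiving = pages_with_organic_traffic(SAMPLE_EXPORT)
    print(len(receiving))  # 2
    print(traffic_gap(["/", "/blog/seo-mistakes", "/old-promo", "/pricing"], SAMPLE_EXPORT))
    # ['/old-promo', '/pricing']
```

A page that shows up in `traffic_gap` isn't proof that it is unindexed; an indexed page can still have little to no visibility, so treat the result as one data point to check alongside others rather than a verdict.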
